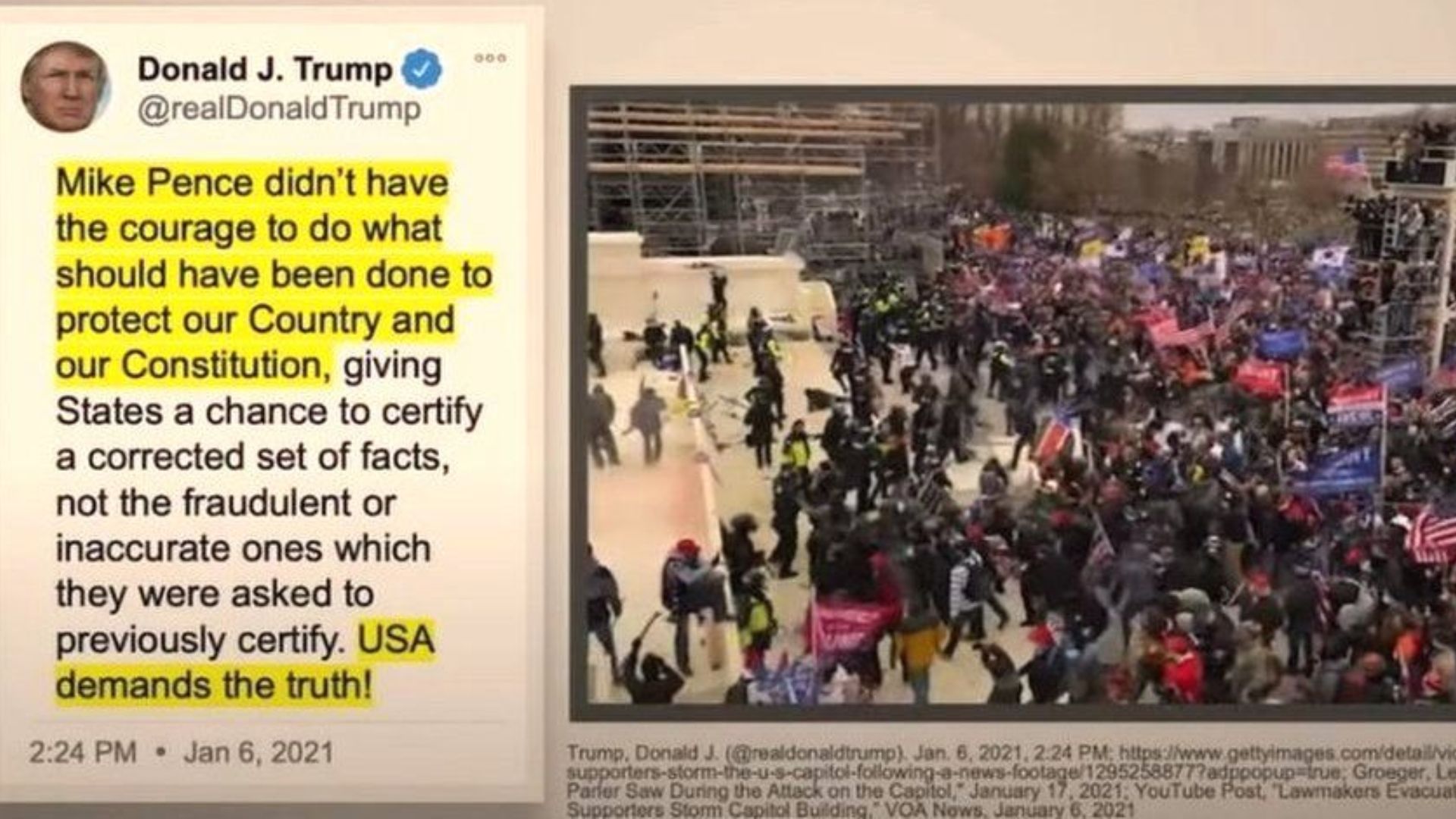

Social media platforms with significant reach or impact in Singapore are now within reach of the long arm of the law. They can no longer host shocking and harmful content and get away with it.

Once the Online Safety (Miscellaneous Amendments) Bill becomes law, social media and online platforms that carry content advocating suicide or self-harm, physical or sexual violence, or terrorism, as well as content depicting child sexual exploitation, posing a public health risk, or likely to cause racial and religious disharmony in Singapore, will be at risk of paying $1m in fines or being blocked in the island republic.

The Bill, tabled for its first reading in Parliament on 3 Oct, is aimed at major social media firms such as Facebook, Instagram and TikTok. It will empower the Infocomm Media Development Authority (IMDA) to order egregious content on these platforms to be blocked or taken down where it can be accessed by local users. IMDA will also require these organisations to mitigate the risk of users being exposed to harmful content.

These orders will not be issued for private communications.

Platforms with “significant reach or impact” in Singapore may be designated as “regulated online communication services” and required to comply with a Code of Practice for Online Safety, which is expected to be in full force by the second half of 2023.

Proposed measures under the draft code, issued on Monday, received support from the public during a month-long consultation that ended in August. Under the code, regulated online platforms will need to establish and apply measures to prevent users, particularly children under 18 years old, from accessing harmful content.

These measures include tools that allow children or their parents to manage their safety on these services. Social media firms will also have to provide practical guidance on what content presents a risk of harm to users, as well as ways for users to report harmful content and unwanted interactions.

Social media platforms are expected to be transparent about how they protect local users from harmful content, by providing information that reflects users’ experience on their services so that users can make informed decisions.

Parliament will debate the Bill at its second reading in November.

